Large Language Models: Exploring Capabilities and Limitations

Last updated on December 4th, 2023 at 09:45 pm

Large language models (LLMs) are deep learning algorithms that have seen significant advancement in recent years, particularly in the field of natural language processing (NLP). These models are capable of performing a wide range of tasks, including text generation, translation, and understanding context. Due to their large size and extensive training, LLMs boast an unprecedented ability to process and generate human-like text, contributing to major breakthroughs in the field of artificial intelligence. As a result, they have garnered significant attention from researchers and industry leaders alike.

A core component of modern LLMs is the use of transformer models, which serve as the underlying architecture for these powerful algorithms. Transformers rely on self-attention mechanisms to efficiently process and understand the relationships between various elements within a given text. The training process for LLMs requires massive amounts of data, often sourced from diverse and extensive text corpora. This allows the models to learn semantic associations and linguistic patterns from the training dataset, ultimately enabling them to generate coherent text that closely resembles human language.

The rapid development and adoption of LLMs have fostered a competitive landscape among key players, with companies such as Google, OpenAI, and IBM racing to develop increasingly advanced models. These efforts have not only spurred innovation but also raised important questions about the ethical implications, technical challenges, and future potential of large language models in shaping the field of artificial intelligence.

Key Takeaways

- Large language models are transforming natural language processing through advanced deep learning techniques.

- Transformers and self-attention mechanisms form the underlying architecture for modern large language models.

- The competitive landscape among major players in AI is driving innovation and raising important questions about the future of large language models.

Foundational Concepts

What Is a Large Language Model (LLM)?

A Large Language Model (LLM) is a type of deep learning model designed to work with language, specifically for Natural Language Processing (NLP) tasks. These models learn from vast amounts of text data to predict and generate plausible language. Most well-known LLMs are built on the Transformer architecture, which has become a driving force behind many recent AI innovations. The original Transformer consists of an encoder and a decoder, which work together to process and generate text. A key feature of Transformers is the attention mechanism, which selectively focuses on specific parts of the input to improve the model’s handling of context.

Evolution of Neural Network Architectures

The development of LLMs has its roots in the advancements of neural networks for NLP tasks. Below is a brief overview of the evolution of neural network architectures for NLP:

- Recurrent Neural Networks (RNNs): RNNs were among the first neural network architectures used in NLP. They process sequences of data by maintaining a hidden state that captures information from past inputs. However, RNNs suffer from the vanishing gradient problem, which makes it difficult to capture long-range dependencies in text.

- Long Short-Term Memory (LSTM) Networks: LSTMs, a type of RNN, were developed to address the vanishing gradient problem. These networks include a gating mechanism that allows them to store and retrieve information over longer time spans.

- Gated Recurrent Unit (GRU) Networks: GRUs, another variation of RNNs, simplify the LSTM design while maintaining the ability to capture long-range dependencies. They use a gating mechanism similar to that of LSTMs, but with fewer parameters.

- Convolutional Neural Networks (CNNs): CNNs, originally designed for image processing, were adapted for NLP tasks by applying convolutions on word embeddings to capture local features and dependencies in the text. However, CNNs have a limited receptive field and may not capture global context effectively.

- Transformers: Transformers were introduced as a novel neural network architecture for NLP tasks, addressing some limitations of RNNs, LSTMs, GRUs, and CNNs. They rely on self-attention mechanisms to process and generate sequences efficiently while capturing both local and global context.

Neural networks have come a long way in enabling Large Language Models to perform complex NLP tasks with remarkable efficiency. The continual advancements in neural networks and deep learning models set the stage for even more sophisticated language understanding and generation capabilities in the future.

Core Components of LLMs

Parameters and Their Significance

Large Language Models (LLMs) such as GPT are powered by millions or even billions of parameters. These parameters are the weights and biases within the model, whose values are learned during training and determine the model’s behavior. In general, a higher number of parameters gives the model more capacity for handling complex tasks, learning patterns, and generating contextually relevant outputs, provided there is enough training data and compute.

In LLMs, parameters sit in several places. Embedding parameters convert tokens into vector representations, the attention and feedforward layers hold most of the remaining weights, and a final output layer is responsible for generating predictions. The parameter count significantly impacts the model’s computational requirements and training time.
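As a rough illustration of where parameters come from, the sketch below counts the weights and biases of a toy model made of a token embedding table, one feedforward block, and an output layer. The layer sizes (`vocab_size`, `d_model`, `d_hidden`) and function names are hypothetical values chosen for the example, not figures from any real LLM.

```python
def linear_params(n_in, n_out, bias=True):
    """Learned values in a dense layer: a weight matrix plus an optional bias vector."""
    return n_in * n_out + (n_out if bias else 0)


def toy_model_params(vocab_size, d_model, d_hidden):
    """Parameter counts for a toy model (hypothetical layout, for illustration only)."""
    counts = {
        "embedding": vocab_size * d_model,                    # one vector per token
        "ff_in": linear_params(d_model, d_hidden),            # feedforward expansion
        "ff_out": linear_params(d_hidden, d_model),           # feedforward projection
        "output_layer": linear_params(d_model, vocab_size),   # scores over the vocabulary
    }
    counts["total"] = sum(counts.values())
    return counts


counts = toy_model_params(vocab_size=1000, d_model=64, d_hidden=256)
for name, value in counts.items():
    print(f"{name}: {value}")
```

Even at this tiny scale, the layers tied to the vocabulary account for most of the total, which hints at why the parameter count grows so quickly as vocabulary and layer sizes increase.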

The Transformer Model

The Transformer Model is a foundational architecture behind modern LLMs. It was introduced by Vaswani et al. in 2017 and revolutionized the field of artificial intelligence, specifically natural language processing. This model effectively captures long-range dependencies and context in text, which previously posed challenges for traditional recurrent neural networks (RNNs) and long short-term memory (LSTM) models.

The Transformer model consists of two main parts:

- Encoder: Processes and represents the input text in a continuous space which the model can understand.

- Decoder: Generates the output based on the encoder’s representation, often used in sequence-to-sequence tasks.

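These components consist of stacked layers of self-attention and feedforward neural networks. Many LLMs use only one of the two halves: BERT is encoder-only, while the GPT models are decoder-only.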

Attention Mechanism Explained

The core feature of the Transformer model is its attention mechanism, which allows the model to weigh the importance of different words in a given context. In LLMs, this mechanism assigns higher weights to the most relevant words and lower weights to the less significant ones, rather than discarding them.

Three forms of attention appear in the Transformer:

- Self-attention: Focuses on relating different positions of the input text.

- Encoder-decoder attention: Helps the decoder to focus on useful parts of the input sequence.

- Multi-head attention: Runs several attention operations in parallel, allowing the model to jointly attend to different aspects of the context using multiple heads.

Benefiting from this attention mechanism, the Transformer model has enabled LLMs to handle more complex tasks and generate human-like text output, pushing the boundaries in artificial intelligence research.
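To make the weighting idea concrete, here is a minimal sketch of scaled dot-product self-attention in plain Python. It assumes the queries, keys, and values are all the raw input vectors; real Transformers first apply learned projection matrices and use several heads, both of which are left out here. The function names and the toy input are illustrative assumptions.

```python
import math


def softmax(xs):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Output is the weighted average of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# Self-attention: the same vectors act as queries, keys, and values.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(x, x, x)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` shows how much one position attends to every other position. No position is dropped entirely; less relevant ones simply receive smaller weights.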

Training Large Language Models

Data Gathering and Processing

When training large language models (LLMs), it is essential to gather and process massive amounts of text data. These datasets typically range from hundreds of gigabytes to terabytes in size and consist of diverse sources such as websites, books, and articles, as seen in models like OpenAI’s GPT-3. To prepare the data for training, researchers perform preprocessing steps, including:

- Tokenization: Splitting the text into meaningful units (words, subwords, or characters)

- Cleaning: Removing irrelevant or low-quality content, such as markup remnants, boilerplate, and duplicated documents

- Normalization: Converting text into a standard format, such as a consistent Unicode form (some pipelines also lowercase all letters)
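The preprocessing steps above can be sketched with the Python standard library. This is a simplified illustration: the regular expressions and the word-level tokenizer are assumptions made for the example, and production LLM pipelines typically use trained subword tokenizers rather than a regex split.

```python
import re
import unicodedata


def clean(text):
    """Remove HTML-like tags and collapse runs of whitespace."""
    text = re.sub(r"<[^>]+>", " ", text)
    return re.sub(r"\s+", " ", text).strip()


def normalize(text):
    """Apply Unicode NFKC normalization and lowercase the text."""
    return unicodedata.normalize("NFKC", text).lower()


def tokenize(text):
    """Split into word tokens and single punctuation marks (toy word-level tokenizer)."""
    return re.findall(r"\w+|[^\w\s]", text)


def preprocess(text):
    return tokenize(normalize(clean(text)))


tokens = preprocess("<p>LLMs  generate   Text!</p>")
print(tokens)
```

The order matters in this sketch: cleaning runs first so that markup never reaches the tokenizer, and normalization runs before tokenization so that equivalent spellings map to the same tokens.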

Unsupervised and Supervised Learning

Training LLMs usually involves a combination of unsupervised and supervised learning techniques. In the unsupervised (more precisely, self-supervised) stage, the model learns to predict tokens, typically the next token, in sequences drawn from the dataset, so the text itself supplies the training signal and no manual labels are needed. This method enables the model to learn patterns and relationships in the language.
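The idea of learning sequence probabilities without labels can be shown with a deliberately tiny stand-in: a bigram model that estimates next-token probabilities by counting. This is not how an LLM is trained (LLMs fit neural networks by gradient descent), but the training signal is the same in spirit, since each token in the raw text serves as the target for the tokens before it. The corpus and function names below are made up for the example.

```python
from collections import Counter, defaultdict


def train_bigram(tokens):
    """Count how often each token follows each other token."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def next_token_probs(counts, prev):
    """Estimated probability of each next token, given the previous one."""
    total = sum(counts[prev].values())
    return {tok: c / total for tok, c in counts[prev].items()}


corpus = "the cat sat on the mat and the cat slept".split()
model = train_bigram(corpus)
probs = next_token_probs(model, "the")
print(probs)
```

No labels were supplied at any point; the "answers" come from the text itself, which is what allows this style of training to scale to very large corpora.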

After the unsupervised training, models often undergo fine-tuning using supervised learning tasks. This process includes providing labeled data to the model to optimize its performance for specific tasks. For instance, LLMs can be fine-tuned to perform tasks like translation, sentiment analysis, and summarization. Additionally, some models employ reinforcement learning from human feedback for training to further enhance their capabilities.

Challenges in Model Training

Despite numerous successes in LLM training, several challenges continue to emerge:

- Computational resources: LLMs require vast amounts of computational power for training, especially considering their billions of parameters.

- Data quality: Ensuring that the training dataset is diverse, representative, and without biases is crucial for effective LLM performance.

- Environmental impact: With the energy consumption associated with training such complex models, achieving efficiency is becoming more critical for sustainability concerns.

- Ethical concerns: As LLMs can inadvertently learn to generate harmful or biased content, addressing ethics during model training is a major challenge.

Overall, training large language models is a complex process that combines data processing with unsupervised and supervised learning techniques, while addressing the associated challenges and limitations.

Key Players and Models in LLM

OpenAI’s GPT Series

OpenAI’s GPT series is one of the most well-known families of large language models. GPT-3 received significant attention for its ability to interpret and generate human-like text, and it was followed by GPT-3.5 and GPT-4. The GPT series has evolved since its initial release, growing in power and size with each iteration, highlighting the potential of transformer-based neural networks.

Google’s Achievements in LLM

Another major player in the LLM landscape is Google. They have developed notable models such as BERT and PaLM. The BERT model (Bidirectional Encoder Representations from Transformers) revolutionized natural language processing by providing a deeper understanding of language context and the relationships between words.

PaLM, short for Pathways Language Model, is another significant achievement from Google. This model is designed for a wide range of tasks such as text classification, sentiment analysis, and question answering. Like BERT, it relies on self-attention mechanisms and the transformer architecture, which enable it to work efficiently with large-scale data.

Other Significant LLM Projects

Apart from OpenAI and Google, there are several other notable LLM projects worth mentioning. One such project is Meta’s RoBERTa, an LLM that shares architectural similarities with BERT. RoBERTa has demonstrated competitive performance in various natural language processing tasks, such as sentiment analysis and syntax understanding.

Another notable LLM project is BLOOM, an open multilingual language model developed by the BigScience research collaboration and designed to process vast volumes of text in diverse tasks. Anthropic, the developer of the Claude models, is also active in the field; its work aims not only to improve the performance and usability of LLMs but also to ensure they are aligned with human values and ethical considerations.

Foundation models are a general term for LLMs used as a base for various tasks. They have become popular due to their reusable nature and widespread applicability, further highlighting the significance of LLM projects in multiple domains.

Applications of LLMs

Natural Language Processing (NLP)

Large Language Models (LLMs) have demonstrated significant capabilities in various Natural Language Processing tasks. They are particularly successful in tackling challenges in translation, sentiment analysis, and summarization. LLMs, like GPT-3, have advanced the state-of-the-art performance in NLP, opening new possibilities for applications and research.

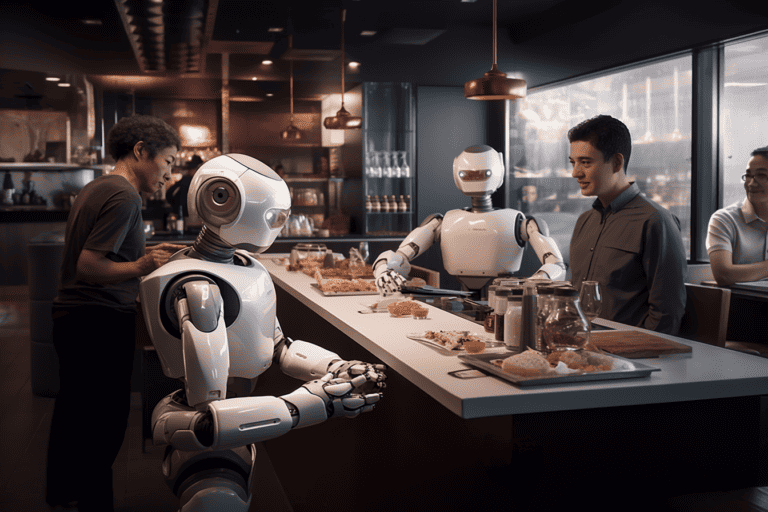

Advances in Conversational AI

LLMs have played a crucial role in the development of Conversational AI, largely improving the performance of chatbots and assistants. By leveraging the power of language understanding and context, modern LLM-based chatbots can provide more accurate and human-like responses in various industries, from customer support to mental health services.

Moreover, they enable the creation of advanced code generation tools, allowing developers to write code in human-readable language while the AI assists by generating relevant code blocks or even complete applications.

Expanding Frontiers: From Text to Images

One of the most remarkable developments related to LLMs is the extension of generative models beyond text-based tasks into the domain of images. Developments like DALL-E showcase the potential of generative AI to create high-quality images from textual descriptions, opening new possibilities for artists, designers, and content creators.

This cross-domain functionality highlights the versatility of LLMs, acting as a foundation for further exploration into the realm of multimodal AI, where models can seamlessly handle and integrate multiple data types, such as text, images, and audio.

The Impact of LLMs

Transforming the Labor Market

Large Language Models (LLMs) have the potential to significantly transform the labor market by automating certain tasks, making existing jobs more efficient, and creating new opportunities. They are particularly useful in fields such as natural language processing and translation[^1^]. LLMs can also help professionals in various industries like medicine[^2^] and psychology[^3^], enabling them to analyze and generate data on a massive scale.

Some possible transformations include:

- Automation of routine tasks: LLMs can handle repetitive tasks, allowing workers to focus on more creative and complex problem-solving activities.

- Improved efficiency: With better language understanding, LLMs can speed up processes like document analysis and classification, making professionals more productive.

- New job opportunities: As new use cases for LLMs emerge, professionals in diverse fields may be needed to manage, fine-tune, and maintain these models.

Influencing Search Engines and SEO

The rise of LLMs has the potential to influence search engines and Search Engine Optimization (SEO) practices. Search engines have already started to implement some aspects of LLM technologies, which could lead to changes in how content is indexed and ranked.

Some possible consequences are:

- Improved indexing: LLMs can better understand the meaning and context of content, which might enable search engines to index web pages more effectively.

- Shift in SEO strategies: With better language understanding, search engines may place increased emphasis on the quality and relevance of content over traditional SEO techniques based on keywords and backlinks.

- New tools for marketers: LLMs can help digital marketers develop more advanced tools for content generation, content analysis, and keyword research.

Ethical Considerations and Biases

Despite their transformative potential, LLMs also raise several ethical concerns, particularly regarding biases and security[^4^]. These concerns include:

- Biases: Since LLMs are trained on vast amounts of textual data, they might inadvertently learn and propagate biases present in the data. This could lead to issues like reinforcing stereotypes and promoting harmful content.

- Misinformation: LLM-generated content might contribute to the spread of misinformation, as it becomes more difficult for users to distinguish between human-generated and machine-generated content.

- Security risks: LLMs might be utilized by bad actors to create convincing phishing attacks, fake news, or other malicious content.

Given the potential impact of these ethical considerations, it is crucial for researchers, developers, and policy-makers to work together in addressing these challenges and developing responsible use guidelines for LLMs.

Footnotes

[^1^]: [2311.07361] The Impact of Large Language Models on Scientific

[^2^]: The future landscape of large language models in medicine

[^3^]: Using large language models in psychology

[^4^]: The Working Limitations of Large Language Models – MIT Sloan Management

Technical Challenges and Solutions

Computational Resources and Efficiency

One of the primary challenges in training large language models (LLMs) is the demand for computational resources. These models require powerful hardware, such as GPUs and TPUs, to process vast amounts of data during training. As models become larger, the need for resources grows steeply, which can be prohibitive for researchers and organizations with limited budgets.

To address this issue, researchers have been exploring various optimization techniques to improve the efficiency of LLMs. Some of these strategies include knowledge distillation, where a large model’s knowledge is transferred into a smaller, more efficient model, and the use of sparsity to reduce the number of active parameters in the model with little loss in performance.
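Knowledge distillation can be sketched as follows: a smaller "student" model is trained to match the softened output distribution of a larger "teacher". The snippet computes one common form of the distillation loss, the KL divergence between temperature-softened distributions. The logits are made-up numbers, and the temperature-squared scaling factor used in some formulations is omitted for brevity.

```python
import math


def softmax(logits, temperature=1.0):
    """Softmax with a temperature; higher temperatures give softer distributions."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


teacher = [4.0, 1.0, 0.5]          # hypothetical teacher logits for three classes
close_student = [3.5, 1.2, 0.4]    # student that roughly agrees with the teacher
far_student = [0.5, 1.0, 4.0]      # student that disagrees with the teacher

print(distillation_loss(teacher, close_student))
print(distillation_loss(teacher, far_student))
```

During training, the student's parameters would be updated to reduce this loss, usually in combination with the ordinary loss on the true labels.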

Dealing with Data and Model Biases

Another significant challenge in LLMs is their susceptibility to data and model biases that may be present in the training data. These biases can inadvertently lead to models that generate biased or inappropriate outputs, which could have serious consequences in real-world applications.

To mitigate such biases, researchers have been exploring various methods, including training on diverse datasets, data augmentation, and bias-reducing pre-processing. Moreover, they are also developing evaluation metrics and benchmarks to measure and identify these biases in LLMs.

Innovations in Model Scale-Up

Scaling up LLMs has been a major focus of recent AI research efforts. As models grow larger, they can require significant hardware and security optimizations to ensure smooth operation. Some of the innovations include hardware-aware optimization, distributed training, and the development of custom accelerators to improve performance and reduce latency.

Furthermore, the AI research community has been actively working on methods to address model transparency, interpretability, and robustness, which are crucial for real-world applications. Innovations in these areas are expected to further improve the practical utility of LLMs, making them more accessible and effective in various domains.

Methodologies for Leveraging LLMs

Fine-Tuning Techniques

Fine-tuning is a crucial practice in leveraging large language models (LLMs). It involves further training a pre-trained model on a specific task or domain, often with limited data, which enables LLMs to adapt more effectively to the problem at hand. The process often involves carefully choosing hyperparameters such as the learning rate or optimizer to prevent overfitting and improve generalization. Related approaches, including few-shot learning and in-context learning, let LLMs perform tasks with minimal or no labeled data; in-context learning does so without updating the model’s weights at all.
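The mechanics of fine-tuning can be illustrated with a one-parameter toy model. The sketch starts from a "pre-trained" weight and runs a few gradient descent steps on a small task-specific dataset. Everything here (the model `y = w * x`, the data, the learning rate) is a hypothetical stand-in chosen so the arithmetic is easy to follow; an actual LLM has billions of weights, but the update rule follows the same principle.

```python
def mse(w, data):
    """Mean squared error of the model y = w * x on (x, y) pairs."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


def fine_tune(w, data, learning_rate=0.05, steps=20):
    """Continue training from an existing weight using plain gradient descent."""
    for _ in range(steps):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= learning_rate * grad
    return w


pretrained_w = 2.0                                   # weight learned on "general" data
task_data = [(1.0, 2.5), (2.0, 5.0), (3.0, 7.5)]     # small task-specific dataset (y = 2.5x)

tuned_w = fine_tune(pretrained_w, task_data)
print(mse(pretrained_w, task_data), mse(tuned_w, task_data))
```

Because training starts near a good solution, a small dataset and a handful of steps are enough here. The learning rate is the kind of hyperparameter mentioned above: too large a value would overshoot, and too small a value would barely move the weight.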

Prompt Engineering and Its Importance

Prompt engineering is another essential aspect of working with LLMs. It refers to the process of designing effective inputs to induce desired outputs from a language model. Given that LLMs can sometimes generate unexpected or undesirable outputs, well-crafted prompts can guide the model towards generating more specific, accurate, and useful answers. In this context, zero-shot prompting refers to describing the task in the prompt without including any worked examples, so the model must rely on what it learned during training. Conversely, few-shot prompting involves providing the model with a small set of examples in the prompt to help it understand the task requirements.
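The difference between zero-shot and few-shot prompting is easiest to see in the prompt text itself. The helpers below only build strings; they do not call any model, and the `Input:`/`Output:` layout is one possible convention assumed for this example rather than a required format.

```python
def zero_shot_prompt(instruction, query):
    """Task description plus the query, with no worked examples."""
    return f"{instruction}\n\nInput: {query}\nOutput:"


def few_shot_prompt(instruction, examples, query):
    """Task description, a few worked examples, then the query."""
    shots = "\n\n".join(f"Input: {x}\nOutput: {y}" for x, y in examples)
    return f"{instruction}\n\n{shots}\n\nInput: {query}\nOutput:"


instruction = "Classify the sentiment of the input as positive or negative."
examples = [
    ("I loved this film.", "positive"),
    ("The service was terrible.", "negative"),
]
query = "The battery life is fantastic."

zero_shot = zero_shot_prompt(instruction, query)
few_shot = few_shot_prompt(instruction, examples, query)
print(few_shot)
```

Both prompts end at `Output:` so that the model's continuation is the answer. The few-shot version additionally demonstrates the expected label format through its examples.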

Transfer Learning and Adaptability

Transfer learning, a crucial component in LLMs’ adaptability, allows the model to apply knowledge acquired in one domain to another domain or task, facilitating efficiency and reducing training time. By leveraging transfer learning, LLMs can quickly adapt to new tasks by fine-tuning their existing knowledge, making them more versatile and applicable across a wide range of problems. For example, an LLM trained on general literature could be fine-tuned for a specific domain, such as legal or medical text, while still utilizing some of its previously acquired knowledge.

Overall, methodologies like fine-tuning, prompt engineering, and transfer learning are essential for leveraging LLMs effectively. These techniques enable such models to adapt and generate outputs that match specific requirements, making them valuable assets in numerous real-world applications.

The Future of LLMs

Trends in AI and Language Models

The ongoing advancements in large language models (LLMs) continue to shape the field of natural language processing (NLP). These models, generally based on transformer architectures, excel at generating and understanding human-like text. Recent breakthroughs include OpenAI’s ChatGPT, which set new benchmarks in NLP capabilities.

The future landscape of LLMs in various industries is anticipated to evolve with a focus on the following aspects:

- Multimodal capabilities: Models like OpenAI’s GPT-4 already accept images alongside text, and future models are expected to process a wider range of multimodal inputs, such as sounds.

- Customization: Tailoring language models to specific domains, industries, or applications will lead to better performance and relevance in real-world scenarios.

- Efficiency: The training and deployment of large language models may become more efficient and environmentally conscious, reducing their carbon footprint.

Chasing General Intelligence

As LLMs improve at tasks involving NLP, research aims to achieve a higher level of general intelligence in artificial intelligence (AI) systems. Currently, language models excel at pattern-matching tasks within textual data, but they are not adept at forming a deep understanding of the world or possessing common sense.

Integrating language models with other AI components may lead to better contextual understanding and cross-domain knowledge transfer. Ideally, future LLMs will be able to seamlessly combine their linguistic abilities with other cognitive skills, bringing them closer to human-like general intelligence.

Developments in AI Regulations

As the capabilities of LLMs advance, the necessity for AI regulations is growing. This implies a coordinated effort from governments, industries, and AI developers to ensure that these powerful tools remain beneficial to society.

Some of the pressing concerns include:

- Responsible AI development: Ensuring that LLMs are designed and deployed ethically, considering privacy concerns and avoiding the amplification of biases.

- Accountability: Defining clear guidelines and responsibilities for developers, users, and organizations working with LLMs.

- Transparency: Ensuring that AI decisions are easily understandable by humans, particularly in critical systems such as healthcare or finance.

In summary, the future of large language models hinges on continuous advancements in AI research and development, aiming at general intelligence and the establishment of robust regulatory frameworks. This will not only increase the usefulness of LLMs but also address the potential risks associated with their rapidly expanding capabilities.

Evaluating LLMs

Testing and Validation Strategies

When working with large language models (LLMs), it’s essential to have effective testing and validation strategies in place. Thorough evaluation ensures that LLMs meet various desirable criteria, such as correctness, efficiency, and robustness in handling language tasks.

One popular approach is knowledge and capability evaluation, which involves assessing an LLM’s grasp of factual knowledge, language fluency, and problem-solving skills. An ideal LLM can answer questions, carry out language tasks, and formulate predictions with a high degree of accuracy.

Another crucial aspect is alignment evaluation, which aims to verify if the LLM can align with users’ intentions and value systems as it performs natural language processing (NLP) tasks. This step is key to ensuring that AI assistance offers practical value and remains ethically sound.

Finally, the safety evaluation of LLMs ensures that unintended consequences and risks are minimized. In this process, LLMs undergo testing to check for any bias, discrimination, or harmful language outputs that could be associated with their performance.

Benchmarking Performance

To accurately evaluate LLMs, specific benchmarks are often employed. These benchmarks are standardized NLP tests focusing on various linguistic abilities, such as sentiment analysis, machine translation, and question-answering.

These performance tests allow researchers and developers to effectively compare LLMs, identifying their unique capabilities and limitations. Benchmarking results can inform the AI community about which models excel at specific tasks and motivate innovation to address existing gaps.
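A benchmark comparison often reduces to scoring each model's answers against reference answers. The sketch below uses exact-match accuracy, one simple metric among many; the reference answers and the two sets of model outputs are invented for illustration and do not come from a real benchmark.

```python
def exact_match_accuracy(predictions, references):
    """Fraction of predictions equal to the reference, ignoring case and outer whitespace."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        return 0.0
    matches = sum(
        p.strip().lower() == r.strip().lower()
        for p, r in zip(predictions, references)
    )
    return matches / len(references)


references = ["paris", "4", "blue"]
model_outputs = {
    "model_a": ["Paris", "4", "green"],    # hypothetical outputs
    "model_b": ["London", "4", "green"],
}

scores = {name: exact_match_accuracy(preds, references) for name, preds in model_outputs.items()}
best = max(scores, key=scores.get)
print(scores, best)
```

Exact match suits short factual answers; tasks such as translation or summarization generally need softer metrics because many different outputs can be acceptable.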

Evolving LLM Capabilities

A key aspect of evaluating LLMs is to observe their emergent abilities. These are capabilities that were not explicitly trained for and that tend to appear unexpectedly as models grow in scale. Understanding these abilities is crucial for enhancing LLM performance in presenting informed results, generating creative content, and offering practical insights.

As LLMs continue to get better, their capabilities have evolved to tackle increasingly intricate problems. Advanced models display remarkable comprehension abilities and exhibit deeper understanding, which makes them invaluable tools for a wide range of applications.

To sum up, evaluating LLMs allows developers to detect errors, identify strengths and weaknesses, and ensure that these models maintain high standards in terms of knowledge, reasoning, and overall performance. With proper testing and validation procedures, LLMs can continue to provide powerful AI assistance across various domains.

Frequently Asked Questions

How do large language models benefit various industries?

Large language models (LLMs) have a significant impact on different industries, including finance, healthcare, and customer support, by automating various text-related tasks. By understanding human language more effectively, LLMs enable efficiency and improve decision-making processes. For example, in the insurance industry, LLMs help in understanding nuances of various policies and streamline the claims process.

What are the primary use cases for large language models?

LLMs have a wide range of applications, such as sentiment analysis, text summarization, language translation, question-answering, and content generation. They have the capability to handle multiple tasks due to their extensive training on diverse data sets.

Can you outline the history and development of large language models?

The history of large language models dates back to the development of recurrent neural networks (RNNs) and Transformer architectures. Over time, models like BERT, GPT, and ELMo have evolved, becoming more powerful and sophisticated. For a detailed history and understanding of LLMs, this article discusses their capabilities and limitations.

What distinguishes large language models from traditional artificial intelligence?

Traditional AI systems require manual feature engineering and task-specific algorithms, while LLMs like GPT-3.5 learn patterns and structures in human language by training on vast amounts of text data. As a result, LLMs can generalize across various tasks and perform better on natural language processing tasks.

What are some notable examples of large language models currently in use?

Some popular large language models in use today include OpenAI’s GPT-3, Google’s BERT, and Meta’s RoBERTa. These models have demonstrated their capabilities in various applications, such as conversational AI and text classification.

How does GPT differ from other types of large language models?

GPT, or Generative Pre-trained Transformer, is a large language model designed primarily for text generation. It differs from other LLMs in how it is pre-trained and then fine-tuned for specific downstream tasks. While models like BERT pre-train bidirectionally, using context on both sides of each token, GPT uses a unidirectional (left-to-right) approach in which each token is predicted from the preceding ones, making it highly suitable for generative tasks like text completion and content generation.
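The unidirectional versus bidirectional distinction can be expressed as an attention mask that says which positions each token is allowed to see. The sketch below builds both masks for a short sequence. It illustrates visibility only; BERT-style pre-training also hides some input tokens and predicts them, which is not shown here. The function names are chosen for this example.

```python
def causal_mask(n):
    """GPT-style mask: position i may attend only to positions 0..i."""
    return [[j <= i for j in range(n)] for i in range(n)]


def bidirectional_mask(n):
    """BERT-style mask: every position may attend to every other position."""
    return [[True] * n for _ in range(n)]


def show(mask):
    """Render a mask as rows of 1s (visible) and 0s (hidden)."""
    return "\n".join(" ".join("1" if allowed else "0" for allowed in row) for row in mask)


print(show(causal_mask(4)))
print()
print(show(bidirectional_mask(4)))
```

In the causal mask, each row reveals one more position than the row above it, which is why a model trained this way can generate text one token at a time.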